Introduction

The rapid advancement of large language models (LLMs), which many people simply call ChatGPT, has transformed how we interact with technology. These powerful AI (Artificial Intelligence) systems have worked their way into our daily lives, assisting us with tasks ranging from creative writing to task automation.

However, it’s crucial to recognize that LLMs are not true artificial general intelligence (AGI), as I wrote before; they are highly specialized tools that require a unique skillset to leverage effectively. In fact, some suggest that they rely more on memorization than on true reasoning capabilities.

Enter prompting – the art of crafting the right instructions and inputs to get the desired output from an LLM. Prompting is quickly emerging as a new essential skill in the age of LLMs, significantly enhancing these models’ utility and versatility. This article will delve into the essential skill of prompting and how it unlocks the potential of LLMs. Let’s jump into it!

Prompting as a New Skill

As LLMs become more prevalent in our lives, access to tools like Copilot at work is becoming increasingly common, and these tools help with many tedious tasks. Prompting involves providing an LLM with specific instructions or a starting point, a “prompt,” to guide the model in generating the desired output. This could range from a simple recipe request to complex instructions for refactoring code. My favorite prompt is “Fix the grammar, flow, and readability if needed: [Your Text]”, which has saved me a lot of time, but more on that later.

Effective prompting hinges on clear and precise communication with the LLM. Understanding the model’s capabilities and limitations allows users to craft prompts that elicit the most relevant and useful responses. This is a learnable skill that demands practice, experimentation, and some understanding of how LLMs work.
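A prompt you reuse often, such as the grammar-fixing one mentioned above, can be kept as a small template. The following is a minimal Python sketch: the names `GRAMMAR_TEMPLATE` and `grammar_prompt` are hypothetical, and actually sending the resulting string to a model is left out because that depends on the tool you use.

```python
# Reusable template for the grammar-fixing prompt quoted in the text.
GRAMMAR_TEMPLATE = "Fix the grammar, flow, and readability if needed: {text}"


def grammar_prompt(text: str) -> str:
    """Fill the template with the user's text (hypothetical helper)."""
    cleaned = text.strip()
    if not cleaned:
        # An empty prompt body gives the model nothing to work on.
        raise ValueError("text must not be empty")
    return GRAMMAR_TEMPLATE.format(text=cleaned)


if __name__ == "__main__":
    # Paste the printed prompt into the chat tool of your choice.
    print(grammar_prompt("  me and him goes to the store yesterday  "))
```

Keeping the wording in one place makes it easy to tweak the instruction once and reuse the improved version everywhere.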

Real-World Prompting Use Cases

Prompting applies to various day-to-day tasks, transforming how we approach challenges and opportunities. To give you a glimpse, here are some practical examples that come in handy:

- Meal Planning and Recipe Generation: Instead of aimlessly browsing recipe websites, prompt an LLM: “Suggest a healthy, quick dinner recipe using chicken and carrots that my family will enjoy.” The model provides a tailored recipe considering ingredients, dietary preferences, and family tastes.

- Explaining Complex Topics or Communications: Struggling with a dense technical article or a confusing email? Prompt the LLM: “Summarize the key points of this article in simple, easy-to-understand language.” The model quickly distills essential information for easier comprehension.

- Evaluating and Editing Written Content: Prompting improves writing. Ask the LLM: “Analyze this article and provide feedback on its clarity, flow, and readability. Suggest any areas for improvement.” The model offers constructive criticism and suggestions for refinement.

- Learning New Skills and Subjects: LLMs act as personalized tutors. Prompt the model: “Provide a step-by-step guide on how to get started with woodworking, including essential tools, safety tips, and beginner projects.” The LLM generates a tailored learning plan.

- Coding Tasks: Prompting is a game-changer for developers. LLMs streamline programming tasks, from generating code snippets and refactoring existing code to creating detailed documentation. For example: “Refactor this function to improve its efficiency and readability.”

- Extracting Information from Images/Videos: LLMs aren’t limited to text. Prompt them to analyze images or even videos (or their transcripts) and extract information. For a receipt: “List the key details from this receipt, including the total amount, date, and items purchased.”

- Translating Complex Text: For foreign languages or technical jargon, prompt an LLM: “Translate this legal contract from French to English, ensuring the meaning and context are accurately conveyed” for nuanced and reliable results.

Keep in mind that many of these use cases carry over to other scenarios. For instance, the same approach used to evaluate an article can be applied to scoring assignments or dissertations, based on given criteria or your own. You can even take it a step further and request additional feedback, guidance, or suggestions for further improvement.

The Importance of “Prompt Engineering”

Prompt engineering is a rapidly evolving discipline that encompasses all aspects of interacting with large language models (LLMs) to maximize the quality of their responses. The field also covers leveraging the differences between various models, developing efficient task-specific prompts, and other advanced techniques, but this discussion will focus solely on the basics. At its core, crafting effective prompts is an art form called “prompt engineering.” In simple terms, this involves understanding the capabilities of LLMs and structuring prompts for clarity and specificity while providing concise context.

- Clarity and Specificity: Vague prompts yield poor results. Provide clear instructions, relevant details, and constraints.

- Relevant Context: LLMs thrive on context. Include details about the task, target audience, or preferences to improve output quality.

- Experimentation: LLMs are sensitive to language variations. Try multiple prompt iterations to find what works best.

- Evaluation and Iteration: Continuously evaluate responses and adjust prompts accordingly. This refinement unlocks the full potential of these AI tools.

In a nutshell, by carefully crafting the prompt, you can guide the model to produce the specific format, style, and content that you’re looking for, while avoiding potential issues such as ambiguity or off-topic responses. So don’t be afraid to resend a slightly changed prompt, especially if you are not receiving the response you wanted.
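The principles above can be sketched as a small helper that assembles a prompt from a task, some context, a list of constraints, and a desired output format. This is only an illustrative Python sketch: the `build_prompt` function, its parameters, and the section labels are assumptions made for this example, not part of any framework or LLM API.

```python
from collections.abc import Sequence


def build_prompt(
    task: str,
    context: str = "",
    constraints: Sequence[str] = (),
    output_format: str = "",
) -> str:
    """Assemble a clear, specific prompt from labeled parts (hypothetical helper)."""
    if not task.strip():
        raise ValueError("task must not be empty")

    # Clarity and specificity: state the task first.
    parts = [f"Task: {task.strip()}"]

    # Relevant context: audience, preferences, background.
    if context.strip():
        parts.append(f"Context: {context.strip()}")

    # Constraints are listed one per line so none gets lost.
    if constraints:
        parts.append("Constraints:")
        parts.extend(f"- {item}" for item in constraints)

    # Say what shape the answer should take.
    if output_format.strip():
        parts.append(f"Output format: {output_format.strip()}")

    return "\n".join(parts)


if __name__ == "__main__":
    prompt = build_prompt(
        task="Suggest a healthy, quick dinner recipe",
        context="Cooking for a family with two children",
        constraints=["Uses chicken and carrots", "Ready in 30 minutes"],
        output_format="Ingredient list followed by numbered steps",
    )
    print(prompt)
```

Because each part is a separate argument, experimenting becomes a matter of changing one piece at a time, for example tightening a constraint or rewording the context, and comparing the responses.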

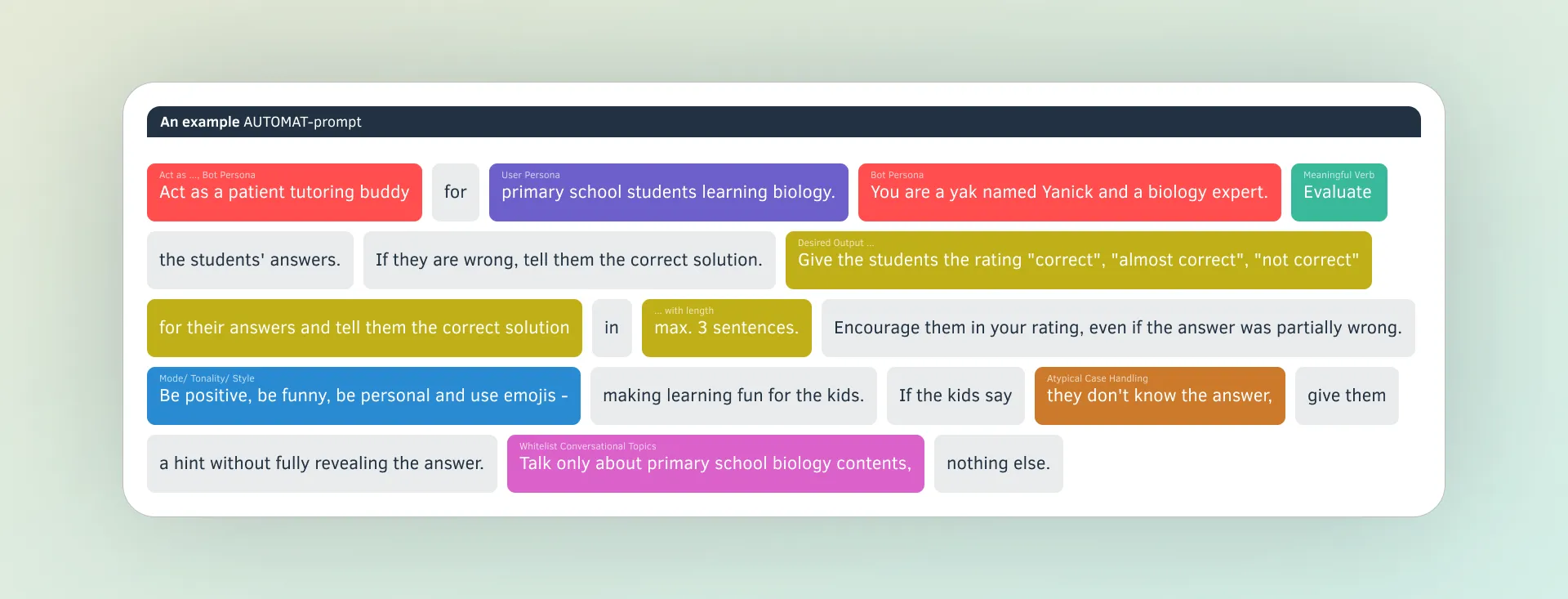

You can also follow more structured frameworks like these or advanced ones like CO-STAR or AUTOMAT.

For more guides, check this meta-repository of multiple good resources.

Embracing Prompting as a Lifelong Skill

The use of tools like Microsoft Copilot at work is no longer just a hypothetical scenario, but a reality I’ve experienced firsthand. This AI-powered assistant’s ability to comprehend and navigate a company’s internal information, such as policies, documents, and emails, makes it a highly relevant and valuable tool for daily tasks.

In the age of LLMs, prompting is no longer a niche skill; it’s a fundamental competency transforming how we approach tasks and challenges. As LLMs continue to evolve and integrate into our lives, mastering the art of prompting is crucial for staying ahead.

Embrace prompting as a lifelong skill by exploring various use cases and experimenting with different techniques. Seek out resources and tutorials to hone your prompt engineering abilities, and stay informed about the latest advancements in LLMs to craft more effective prompts.

Prompting is not a one-size-fits-all solution. It’s a dynamic, iterative process that requires adaptability and a willingness to learn. While you can pick up the basics quickly, it’s worth consistently practicing and refining your prompting skills to unlock new levels of productivity, creativity, and problem-solving.

Conclusion

In the age of large language models, whether you use ChatGPT, Copilot, Claude, Gemini, Perplexity.ai, or Pi.ai, prompting has emerged as an essential skill. Mastering the art of crafting effective prompts lets you harness the power of LLMs to streamline daily tasks, deepen your understanding of complex topics, and unlock avenues for creativity and innovation.

As LLMs permeate our lives, embrace prompting as a lifelong learning journey; this tech will stay with us for a long time. Explore possibilities, experiment with techniques, and watch as your ability to collaborate with these powerful AI tools transforms how you approach challenges and seize opportunities. The future is here, and it’s time to master the art of prompting.

What are your biggest challenges when it comes to crafting effective prompts? Which LLM do you use most often? Share your thoughts and experiences in the comments below!